Your voice. Your pace.

Ideas don't arrive in perfect sentences.

They pause. They revise. They find their way.

Rift is built for how people actually think —

patient, precise, and ready when you are.

Speak naturally. Rift transcribes.

You decide when you're done.

No auto-endpointing

Speak. Pause. Think. Rift waits.

Many other dictation apps cut you off after 2 seconds of silence.

Others

"The quick brown—"

Cut off after pause

Rift

"The quick brown fox jumps over the lazy dog."

You press stop when ready
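The contrast above can be sketched in a few lines. A silence-timeout endpointer stops after 2 seconds of quiet frames. Manual endpointing ignores pauses and ends only when you press stop. This is an illustrative sketch, not Rift's code. It assumes per-frame energy values, a 20 ms frame, and a fixed energy threshold, and all three are hypothetical.

```python
from typing import List, Optional

FRAME_MS = 20  # hypothetical frame length


def silence_endpoint(energies: List[float], threshold: float = 0.01,
                     silence_ms: int = 2000) -> Optional[int]:
    """Frame index where a silence-timeout endpointer would cut off, or None."""
    limit = silence_ms // FRAME_MS  # consecutive quiet frames before cutting
    quiet = 0
    for i, e in enumerate(energies):
        quiet = quiet + 1 if e < threshold else 0
        if quiet >= limit:
            return i + 1  # everything after this frame is dropped
    return None


def manual_endpoint(energies: List[float], stop_frame: int) -> int:
    """Manual endpointing: pauses are ignored, recording ends at stop."""
    return min(stop_frame, len(energies))
```

With 1 second of speech, a 2.5-second pause, and another second of speech, the silence-timeout endpointer cuts at frame 150. That is partway through the pause, so the second phrase is lost. Manual endpointing keeps all 225 frames.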

250ms

First-word capture

Your first word is never lost.

A 250ms lead-in buffer starts recording before you even finish pressing the button.
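A common way to build a lead-in buffer is a pre-roll ring buffer. The microphone is read continuously, and while idle only the most recent 250 ms is kept. When recording starts, that audio is placed in front of everything captured afterwards. The sketch below assumes 16 kHz mono float samples and uses a hypothetical `PreRollRecorder` class. It illustrates the technique and is not Rift's Core Audio implementation.

```python
from collections import deque
from typing import Iterable, List

SAMPLE_RATE = 16_000  # assumed input rate
LEAD_IN_MS = 250


class PreRollRecorder:  # hypothetical name, for illustration only
    def __init__(self, sample_rate: int = SAMPLE_RATE, lead_in_ms: int = LEAD_IN_MS):
        # Ring buffer that only ever holds the most recent lead-in window.
        self.pre_roll = deque(maxlen=sample_rate * lead_in_ms // 1000)
        self.recording: List[float] = []
        self.active = False

    def feed(self, samples: Iterable[float]) -> None:
        """Called for every microphone block, whether recording or not."""
        if self.active:
            self.recording.extend(samples)
        else:
            self.pre_roll.extend(samples)  # oldest samples fall off automatically

    def start(self) -> None:
        """Button pressed: seed the recording with the buffered lead-in."""
        self.recording = list(self.pre_roll)
        self.pre_roll.clear()
        self.active = True

    def stop(self) -> List[float]:
        self.active = False
        return self.recording
```

Speech that begins up to 250 ms before the press ends up at the start of the returned recording.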

Buffered

Button pressed

Recording

"Hel—" is already captured

25s

Rolling context window

The model considers the last 25 seconds of audio.

It understands context, not just isolated words.
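One simple way to keep a rolling window is a bounded queue of samples. Each new chunk is appended, audio older than 25 seconds falls off the front, and the model is given the whole window on each pass. This is a sketch under assumptions: 16 kHz mono audio and a hypothetical `RollingContext` class. The page does not say how Rift trims or re-decodes its window.

```python
from collections import deque
from typing import List

SAMPLE_RATE = 16_000  # assumed input rate
CONTEXT_SECONDS = 25


class RollingContext:  # hypothetical name, for illustration only
    def __init__(self, sample_rate: int = SAMPLE_RATE, seconds: int = CONTEXT_SECONDS):
        self.sample_rate = sample_rate
        self.samples = deque(maxlen=sample_rate * seconds)

    def push(self, chunk: List[float]) -> None:
        # Appending past capacity evicts the oldest samples.
        self.samples.extend(chunk)

    def window(self) -> List[float]:
        """The audio the model would see on its next decode pass."""
        return list(self.samples)

    def seconds_held(self) -> float:
        return len(self.samples) / self.sample_rate
```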

Live paste

Text appears in your app as you speak.

Text streams in real time. When you stop, a final pass reconciles it with the complete transcript.

The quick brown fox jumps over the lazy dog.
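One way to reconcile already-pasted text with the final transcript is to keep the longest common prefix and retype only the part that differs. The sketch below assumes the app can send backspaces and typed text to the focused field. The `reconcile` helper is hypothetical, and the page does not describe Rift's actual strategy.

```python
import os
from typing import Tuple


def reconcile(pasted: str, final: str) -> Tuple[int, str]:  # hypothetical helper
    """Return (backspaces, text_to_type) that turn `pasted` into `final`."""
    keep = len(os.path.commonprefix([pasted, final]))  # characters already correct
    backspaces = len(pasted) - keep  # delete the divergent tail...
    return backspaces, final[keep:]  # ...then type the corrected tail
```

For example, if live paste produced "...over the lazy dock" and the final transcript is "...over the lazy dog.", this returns two backspaces followed by "g.". Everything before the first differing character stays untouched.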

Auto-fix

Hallucination detection

If the first transcription guess is wrong, Rift detects it and replaces it automatically.

No manual cleanup. No re-recording.

Real-time

Streaming transcription

Audio is processed in chunks as you speak.

No waiting for you to finish.
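Chunked streaming amounts to regrouping incoming audio into fixed-size pieces. Each piece goes to the recognizer as soon as it is full. Below is a minimal generator-based sketch. It assumes 16 kHz audio and a hypothetical 0.5-second chunk, since the page gives no chunk length.

```python
from typing import Iterable, Iterator, List

SAMPLE_RATE = 16_000  # assumed input rate
CHUNK_SECONDS = 0.5   # hypothetical chunk length


def chunks(blocks: Iterable[List[float]],
           chunk_size: int = int(SAMPLE_RATE * CHUNK_SECONDS)) -> Iterator[List[float]]:
    """Regroup arbitrary microphone blocks into fixed-size chunks.

    Each full chunk is yielded immediately, so transcription can begin while
    the speaker is still talking. Any remainder is flushed at the end.
    """
    pending: List[float] = []
    for block in blocks:
        pending.extend(block)
        while len(pending) >= chunk_size:
            yield pending[:chunk_size]
            pending = pending[chunk_size:]
    if pending:
        yield pending
```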

Select text. Hear it spoken.

First word in 150 milliseconds.

150ms

First-word latency

You hear the first word before the sentence finishes generating.

No loading spinners. No waiting.

Seamless

Clause-level streaming

The next sentence is synthesized while the current one plays.

No gaps. No stutters. Continuous audio.
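Clause-level streaming can be sketched as a producer/consumer pair. Text is split at clause punctuation, and a worker thread synthesizes clauses into a small queue. Playback drains the queue, so the next clause is generated while the current one plays. The `synthesize` and `play` callables are placeholders for a TTS model and an audio sink. The splitting rule is an assumption, not Kokoro's or Rift's.

```python
import queue
import re
import threading
from typing import Callable, List

# Assumed rule: split on whitespace that follows clause-ending punctuation.
CLAUSE_RE = re.compile(r"(?<=[,;:.!?])\s+")


def split_clauses(text: str) -> List[str]:
    return [c for c in CLAUSE_RE.split(text.strip()) if c]


def speak(text: str, synthesize: Callable[[str], bytes],
          play: Callable[[bytes], None], lookahead: int = 1) -> None:
    """Synthesize upcoming clauses in a background thread while playing."""
    q: "queue.Queue[bytes | None]" = queue.Queue(maxsize=lookahead)

    def producer() -> None:
        for clause in split_clauses(text):
            q.put(synthesize(clause))  # blocks once `lookahead` clauses are waiting
        q.put(None)  # sentinel: no more audio

    threading.Thread(target=producer, daemon=True).start()
    while (audio := q.get()) is not None:
        play(audio)  # meanwhile the producer prepares the next clause
```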

20ms

Audio poll rate

The audio buffer is checked every 20 milliseconds.

Imperceptible latency between chunks.

50 checks per second
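The arithmetic behind the poll rate is 1000 ms / 20 ms = 50 checks per second. Here is a minimal polling loop in that spirit. It assumes a thread-safe queue of audio chunks, ended by a `None` sentinel, and a hypothetical `write` callback for the output device. It is not Rift's playback code.

```python
import queue
import time
from typing import Callable, Optional

POLL_INTERVAL_MS = 20  # as stated above


def checks_per_second(interval_ms: int = POLL_INTERVAL_MS) -> int:
    return 1000 // interval_ms  # 1000 ms / 20 ms = 50


def poll_loop(chunks: "queue.Queue[Optional[bytes]]",
              write: Callable[[bytes], None]) -> None:  # `write` is hypothetical
    """Forward ready chunks to output; when empty, check again after 20 ms."""
    while True:
        try:
            chunk = chunks.get_nowait()
        except queue.Empty:
            time.sleep(POLL_INTERVAL_MS / 1000)  # nothing ready yet
            continue
        if chunk is None:  # sentinel: the stream has finished
            return
        write(chunk)
```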

Pause anywhere

Tap to pause mid-syllable. Tap again to resume from the exact position.

Your place is never lost.

Tap to pause
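To resume from the exact position, the player only needs a sample cursor. Pausing freezes the cursor and resuming picks it up again. Below is a minimal sketch over an in-memory buffer. The `Player` class and its block-based `next_block` method are hypothetical, not Rift's API.

```python
from typing import List


class Player:  # hypothetical name, for illustration only
    def __init__(self, samples: List[float]):
        self.samples = samples
        self.pos = 0          # index of the next sample to play
        self.paused = False

    def toggle(self) -> None:
        """Tap: pause if playing, resume if paused. The cursor is never reset."""
        self.paused = not self.paused

    def next_block(self, n: int) -> List[float]:
        """Next n samples for the audio device (silence while paused)."""
        if self.paused:
            return [0.0] * n
        block = self.samples[self.pos:self.pos + n]
        self.pos += len(block)
        return block
```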

0.5× – 2×

Playback speed

Speed up for skimming. Slow down for comprehension.

Adjust in real time without restarting.
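Changing speed without restarting comes down to consuming source audio at `speed` samples per output sample, starting from the current position. The sketch below uses plain linear-interpolation resampling, which shifts pitch along with tempo. A pitch-preserving time-stretch algorithm avoids that. The page does not say which method Rift uses, and the function names here are hypothetical.

```python
from typing import List, Tuple

MIN_SPEED, MAX_SPEED = 0.5, 2.0


def clamp_speed(speed: float) -> float:
    return max(MIN_SPEED, min(MAX_SPEED, speed))


def render(samples: List[float], pos: float, n: int,
           speed: float) -> Tuple[List[float], float]:  # hypothetical helper
    """Produce up to n output samples starting at fractional position `pos`.

    Returns the block and the new position, so speed can change between
    blocks without restarting. Linear interpolation changes pitch too.
    """
    step = clamp_speed(speed)
    out: List[float] = []
    for _ in range(n):
        i = int(pos)
        if i + 1 >= len(samples):  # need a right neighbour to interpolate
            break
        frac = pos - i
        out.append(samples[i] * (1.0 - frac) + samples[i + 1] * frac)
        pos += step
    return out, pos
```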

Two pipelines. Zero cloud. Everything on your Mac.

Start dictation

Core Audio streams from your microphone with a 250ms lead-in buffer. Your first word is never lost.

Parakeet runs on the Apple Silicon GPU via MLX. 25 seconds of rolling context. Real-time streaming.

Text appears at your cursor as you speak. Final reconciliation when you stop. Hallucinations auto-fixed.

Speak selected text

Highlight text in any app or copy it to the clipboard. Rift reads whatever you give it.

Kokoro generates audio clause-by-clause. First word in 150ms. Next sentence ready before the current one ends.

Audio streams to system output. Pause anywhere, resume from the exact position. 0.5× to 2× speed.

Your voice never leaves your Mac. Ever.

Zero file I/O

Audio is synthesized directly to memory. Nothing is written to disk. Nothing persists after you close the app.
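In Python terms, synthesizing directly to memory means the encoded audio lives in an in-memory buffer rather than a temporary file. Here is a sketch using the standard-library `wave` module with `io.BytesIO`. It assumes 16-bit mono PCM at 24 kHz, and the rate is an assumption. It only illustrates the no-disk pattern and is not Rift's code.

```python
import io
import struct
import wave
from typing import List

SAMPLE_RATE = 24_000  # assumed output rate


def to_wav_bytes(samples: List[float], sample_rate: int = SAMPLE_RATE) -> bytes:
    """Encode float samples in [-1, 1] as a 16-bit mono WAV held in memory."""
    buf = io.BytesIO()  # in-memory file object: nothing touches the disk
    with wave.open(buf, "wb") as w:
        w.setnchannels(1)
        w.setsampwidth(2)  # 16-bit PCM
        w.setframerate(sample_rate)
        clipped = (max(-1.0, min(1.0, s)) for s in samples)
        w.writeframes(b"".join(struct.pack("<h", int(s * 32767)) for s in clipped))
    return buf.getvalue()
```

Because `wave` did not open the `BytesIO` itself, closing the writer leaves the buffer readable. When the bytes go out of scope, nothing persists.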

Tested on real hardware. Real workloads.

M1 MacBook Air

M3 MacBook Pro

M4 Mac Studio

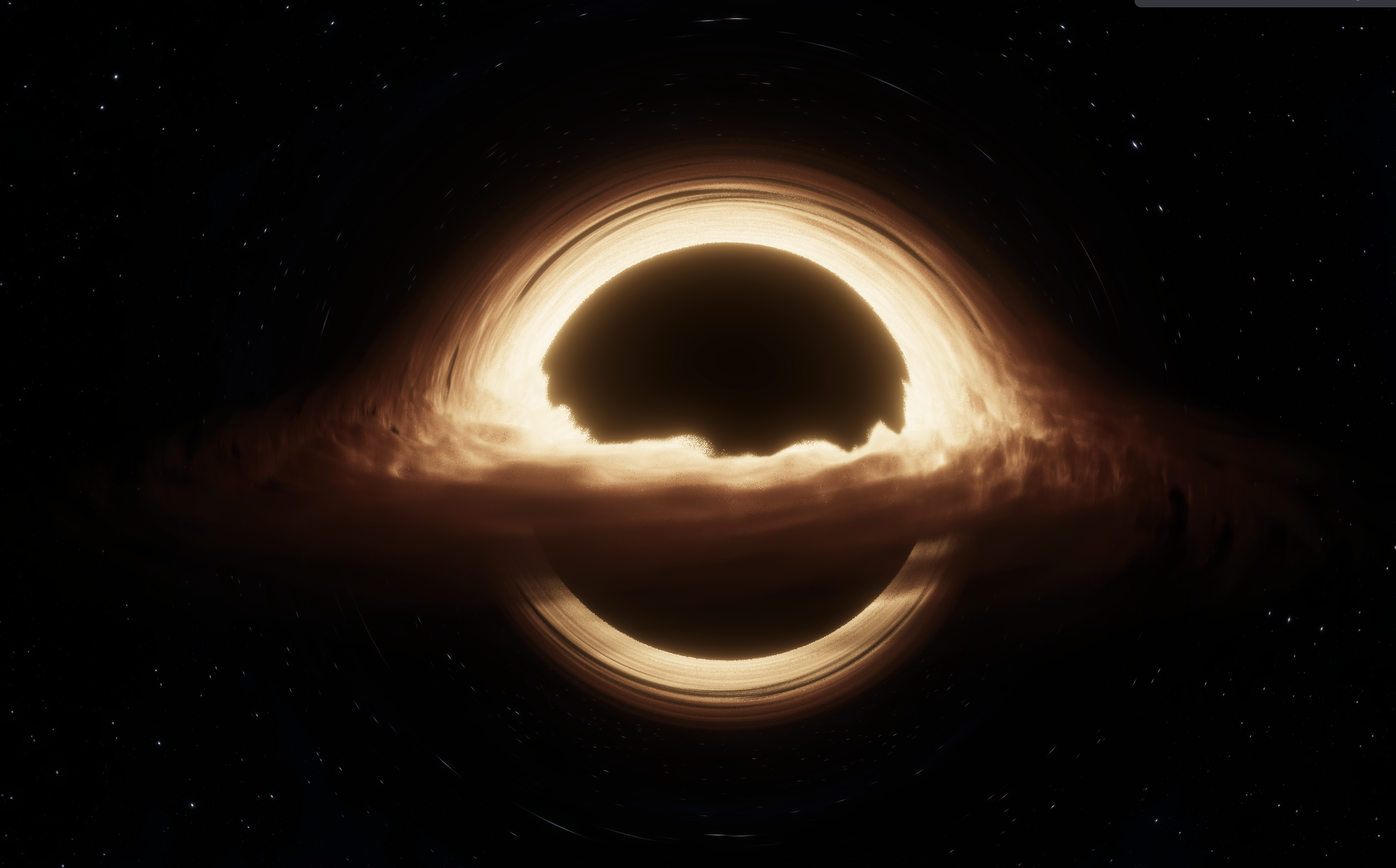

The visual metaphor

A black hole where your data goes in — and stays in.

Your Mac is the center of gravity. All processing happens here — voice recognition, text synthesis, everything. No servers. No cloud. One machine.

Your voice flows in like matter spiraling toward the event horizon. It gets captured, processed, transformed. The warm glow is energy being released as computation.

The point of no return — but in a good way. Once your words enter Rift, they never leave your machine. No telemetry, no uploads, no exceptions.

Just as light bends around a black hole, your voice bends into text. Text bends into voice. Transformation through the most powerful force — local compute.

Raymarching

Volumetric rendering via signed distance functions. The sphere-traced shader runs 128 iterations per pixel to simulate photon paths.
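Sphere tracing advances each ray by the value of the signed distance function, the largest step guaranteed not to cross a surface. Below is a minimal CPU sketch with a single sphere SDF and a 128-step cap matching the iteration count above. The hit tolerance and maximum distance are assumptions. The real shader runs on the GPU and also bends rays, which this straight-line version omits.

```python
import math
from typing import Optional, Tuple

Vec = Tuple[float, float, float]
MAX_STEPS = 128   # iteration cap per pixel, as stated above
EPSILON = 1e-4    # hit tolerance (assumed)
MAX_DIST = 100.0  # give up beyond this distance (assumed)


def sphere_sdf(p: Vec, radius: float = 1.0) -> float:
    """Signed distance from p to a sphere at the origin (negative inside)."""
    return math.sqrt(p[0] ** 2 + p[1] ** 2 + p[2] ** 2) - radius


def sphere_trace(origin: Vec, direction: Vec) -> Optional[float]:
    """Distance along a unit-length ray to the surface, or None on a miss."""
    t = 0.0
    for _ in range(MAX_STEPS):
        p = tuple(o + t * k for o, k in zip(origin, direction))
        dist = sphere_sdf(p)
        if dist < EPSILON:
            return t      # close enough to count as a hit
        t += dist         # safe step: no surface is nearer than dist
        if t > MAX_DIST:
            break
    return None
```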

Schwarzschild geodesics

Light follows the curved spacetime geometry of a non-rotating black hole. The photon sphere, where light can circle the hole, lies at 1.5× the event horizon radius and produces the bright ring.
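For reference, below are the standard Schwarzschild relations behind the 1.5× ratio. M is the black hole's mass, G the gravitational constant, and c the speed of light. The second line is the photon orbit equation for u = 1/r, which a geodesic ray tracer can integrate. The page does not say which form the shader uses.

```latex
r_s = \frac{2GM}{c^2}, \qquad
r_{\mathrm{ph}} = \frac{3GM}{c^2} = \tfrac{3}{2}\, r_s

\frac{d^2 u}{d\varphi^2} + u = \frac{3GM}{c^2}\, u^2 = \tfrac{3}{2}\, r_s\, u^2,
\qquad u = \frac{1}{r}
```

Setting u constant makes the second derivative vanish. The solution is then u = 2/(3 r_s), a circular photon orbit exactly at r_ph.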

Keplerian disk

Accretion disk particles orbit according to Kepler's laws. Inner particles orbit faster, creating the characteristic spiral structure.
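Kepler's third law gives the angular speed of a circular orbit as ω = sqrt(GM / r³). Angular speed therefore falls off as r^(-3/2), and a particle at a quarter of the radius circles eight times faster. Below is a small sketch in arbitrary units. GM = 1 is an assumption for illustration. Each particle's angle advances by ω·dt per frame.

```python
import math
from typing import List

GM = 1.0  # gravitational parameter in arbitrary simulation units (assumed)


def angular_speed(r: float, gm: float = GM) -> float:
    """Keplerian angular speed of a circular orbit at radius r."""
    return math.sqrt(gm / r ** 3)


def step_angles(radii: List[float], angles: List[float], dt: float) -> List[float]:
    """Advance each particle's orbital angle by one time step.

    Inner particles gain more angle per step, so the disk shears over time.
    """
    return [(a + angular_speed(r) * dt) % (2 * math.pi) for r, a in zip(radii, angles)]
```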

ACES tonemapping

The film industry's ACES tone curve compresses HDR luminance into the displayable range while preserving the fiery glow of the accretion disk.
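Krzysztof Narkowicz's approximation is a widely used fit to the ACES filmic curve in real-time shaders, and a per-channel version is shown below. The page does not say whether this shader uses that fit or the full ACES transform. Treat the sketch as an illustrative stand-in.

```python
from typing import Tuple

# Narkowicz's curve-fit constants for the ACES filmic tone curve.
A, B, C, D, E = 2.51, 0.03, 2.43, 0.59, 0.14


def aces_approx(x: float) -> float:
    """Map a linear HDR value x >= 0 to a displayable value in [0, 1]."""
    y = (x * (A * x + B)) / (x * (C * x + D) + E)
    return min(1.0, max(0.0, y))


def tonemap(rgb: Tuple[float, float, float]) -> Tuple[float, ...]:
    return tuple(aces_approx(c) for c in rgb)
```

Bright values roll off smoothly toward 1.0 instead of clipping abruptly. This is what keeps the disk's hot core from turning into a flat white blob.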

Visualization based on Singularity by MisterPrada

Yes, 100%. Apart from the one-time model download on first launch, Rift never connects to the internet. All processing happens locally on your Mac using the MLX framework.

Currently English only. The underlying Parakeet model supports multiple languages, and we're working on enabling them in future updates.

Not yet. Rift uses the Kokoro model's built-in voices. Custom voice cloning may be added in the future.

Never. Audio is processed in memory and discarded immediately. Nothing is written to disk or sent anywhere.

On first launch, Rift downloads and caches the ML models (~2GB). Subsequent launches are instant.
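Download-once behavior is a standard cache check. The app looks for the model files in a local cache directory and fetches them only if they are missing. The sketch below uses a hypothetical cache layout and an injected `download` function. Rift's actual cache location and file names are not given on the page.

```python
from pathlib import Path
from typing import Callable


def ensure_model(cache_dir: Path, name: str,
                 download: Callable[[Path], None]) -> Path:  # hypothetical helper
    """Return the local path for `name`, downloading only on first use."""
    target = cache_dir / name
    if not target.exists():
        cache_dir.mkdir(parents=True, exist_ok=True)
        partial = target.with_name(target.name + ".partial")
        download(partial)       # write to a temporary name first...
        partial.rename(target)  # ...so an interrupted download is never reused
    return target
```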

No. Rift requires Apple Silicon (M1 or later) for the MLX machine learning framework.

Yes. The full source code is available on GitHub under the MIT license.

Download the DMG, drag to Applications, and launch. Apple Silicon (M1+) required. If macOS shows a security warning, check the installation guide for a quick fix. First launch downloads ~2GB of ML models.

The Technology

Three technologies working together. All running locally on Apple Silicon. No cloud, no network latency, no compromises.

The Foundation

MLX, Apple's machine learning framework. Runs entirely on your Mac's GPU and CPU.

Voice to Text

Parakeet, NVIDIA's state-of-the-art speech recognition model, optimized for Apple Silicon.

Text to Voice

Kokoro, neural text-to-speech with natural-sounding voices. Real-time synthesis.

Your voice. Your Mac. Nothing else.

Download for macOS